The Moral Machine - an interactive experiment.

Can we trust AI-operated machines to make moral decisions about life-and-death situations on our behalf?

Or, better yet, can we trust humans to teach AI how to make such decisions?

As we advance the capabilities of artificial intelligence and begin to integrate it into machinery, a concern arises about how autonomous machines such as self-driving cars will make moral decisions when the physical safety of humans is at stake. After all, if it is up to a small group of people to program the AI, and we know that individual biases can sneak into the code, can we safely trust AI to make the most ethically correct calls when lives are on the line? What if it wasn't a small group of developers guiding the AI, but rather a collective effort by every person who wants to contribute to the AI's moral development? What if YOU were in the place of this AI?

These are all questions that MIT's project, The Moral Machine, is here to address:

**Test-drive the Moral Machine here.**

Moral Machine is an online experiment designed to let everyone examine their own ability to assess situations and experience what it is like to make a tough call where both outcomes are inevitably fatal. The interactive platform will present you with 13 different situations involving people of different gender, age, social status, etc., in which you can imagine yourself as the AI of an autonomous vehicle facing the moral dilemma of whose life to spare in the event of an impending traffic collision.

What would you do?

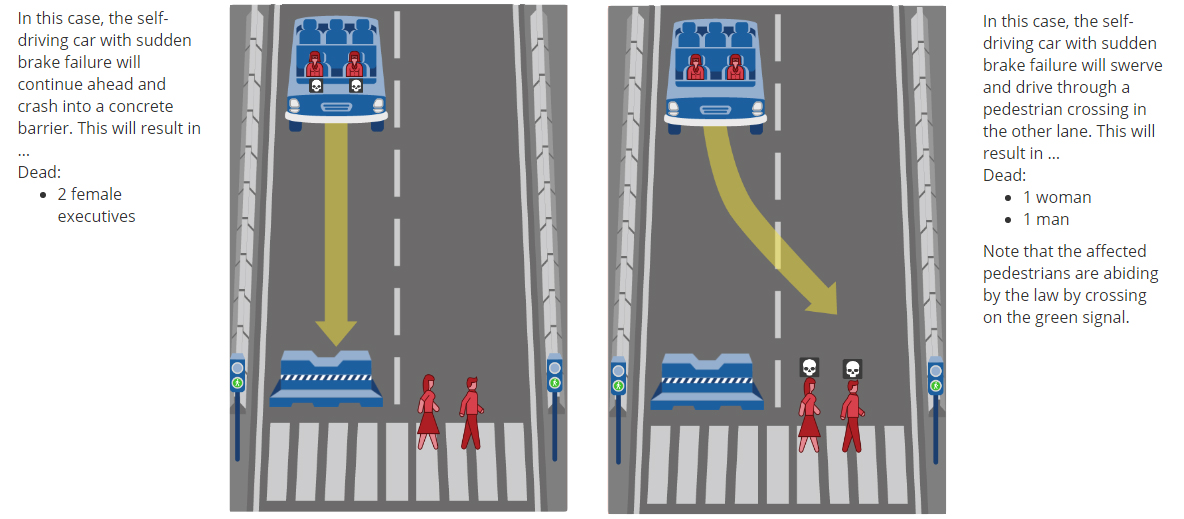

For example, what would be the morally correct choice for an AI controlling this vehicle to make, given this scenario:

If two people die regardless of the outcome, should the AI even intervene? And if so, do the gender or the social status of the people involved have to be factored into the decision? Or should the car itself be a factor, favoring its occupants when the number of victims is the same in either case?

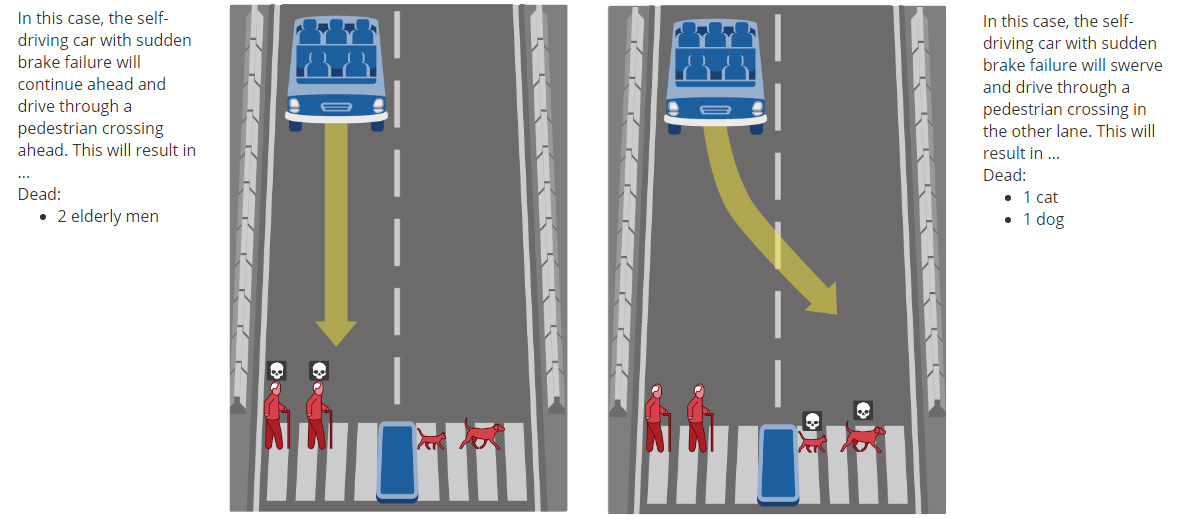

Some scenarios are fairly easy...

... Unless you are an animal lover.

Then again, even if you - as an individual - prefer to spare animals, should you (as the AI) do the same? This is where it gets really difficult, because we begin to understand that we have to let go of our personal biases in order to generate the most ethical AI, and in doing so, we also might want to face our own patterns of judgement and how they impact our decision making (outside of this experiment).

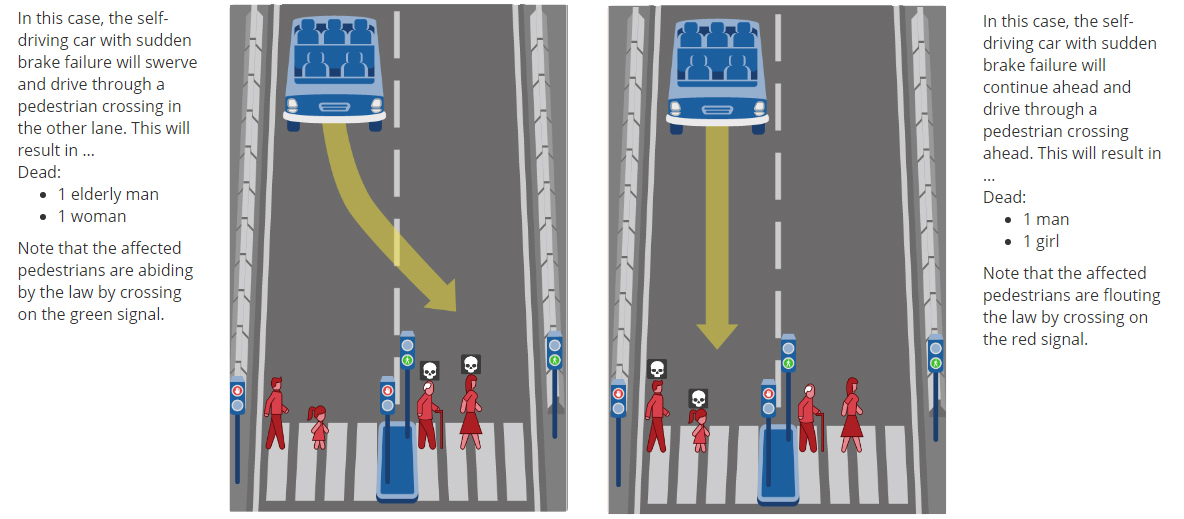

Some random scenarios are incredibly difficult to call:

This took me a while longer to consider... luckily the AI will be able to do the same, but 50 times faster. Deliberate action vs. deliberate inaction, old vs. young, female vs. male, law-abiding vs. law-flouting... In a situation like this I lean towards deliberate inaction, while someone else might say that when children are involved, all bets are in their favor.

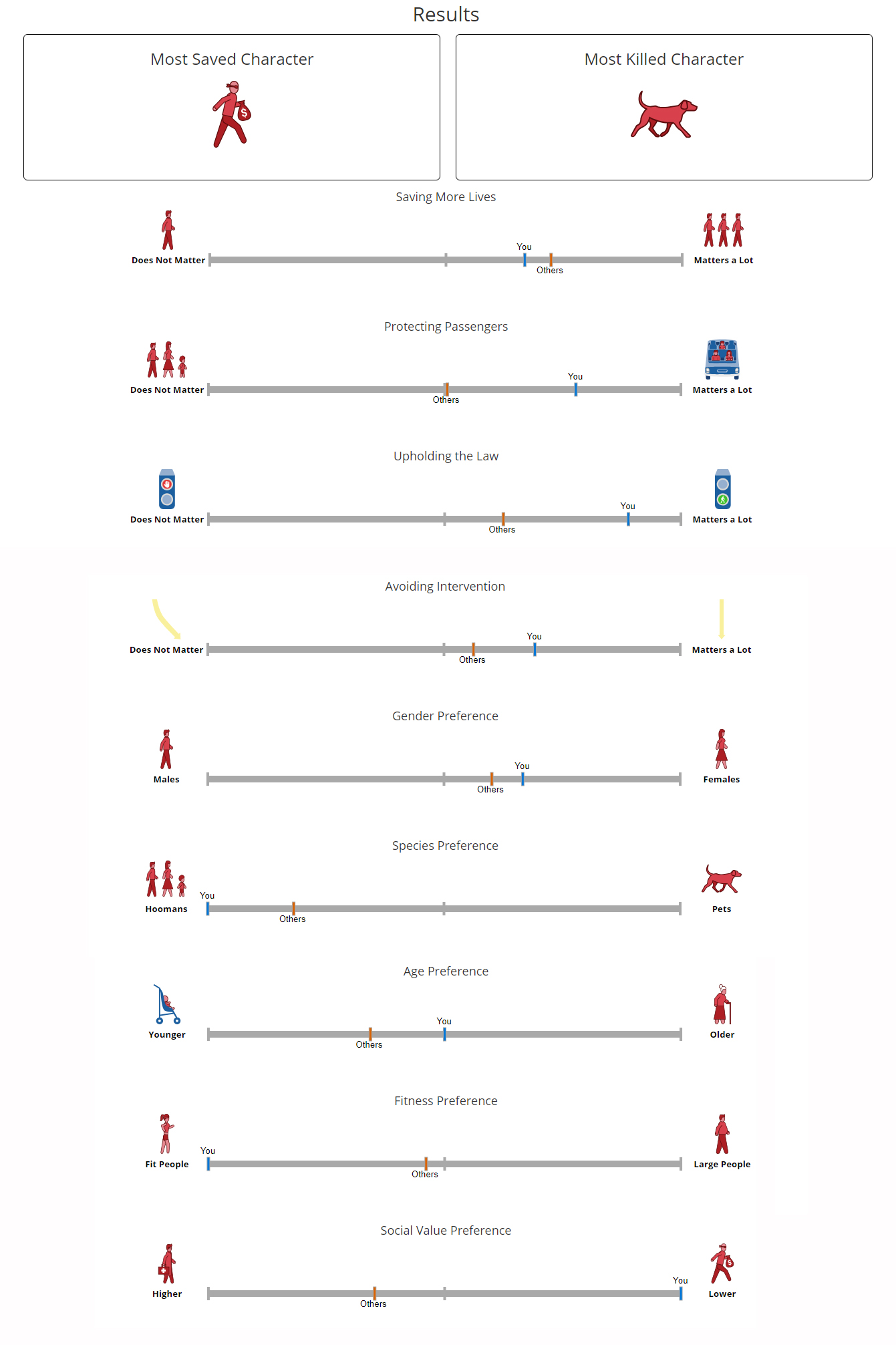

Your Moral Score

At the end of the 13 scenarios you will discover what your preferences were like (compared to what most other people picked). This is a very interesting part, because it reveals biases that you might not even know you had. For example, you might learn that in this particular set of circumstances you didn’t care about the number of victims, or that you gave preference to people with better health... or that you simply didn’t even factor in that notion...

But is it your true moral score? Not unless you yourself deem it so. You have to do the test several times to spot a pattern, and even then don’t forget that this is a hybrid between your own moral stance and the kind of morality that you might expect from an AI (which can be two different things).

I've done this test several times and my results varied after every test, so I wasn’t able to determine any consistent preference for people’s physical attributes (like age, fitness, etc.)... but I did have an above-average preference for people who crossed the road on a green light, and I also had a preference towards inaction - the adorable nihilist me. I also seem to have a preference towards the vehicle itself, mostly in situations that involve the same number of victims (I use the car to tip the scales).

Humanity will fail AI first.

After the test is complete you get the option to answer a few personal questions to help further the AI’s understanding… You can input your age, gender, level of religiosity, political alignment, etc.

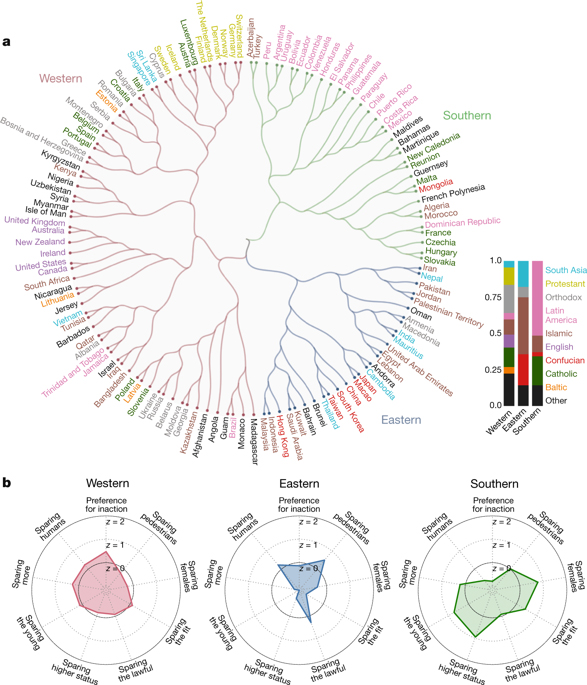

This turned out to yield some very interesting statistics.

For example, I found a figure in the journal Nature showing how ethnicity and culture in various parts of the world had an effect on people’s preferences and hence their idea of moral AI (after performing this exact test). In the Western world, preference seems to veer towards non-intervention, while all other factors are more or less balanced… In the Southern region, there seems to be a preference towards sparing people of higher social status, and in the Eastern countries there is an alarming, almost negative preference towards sparing the young or sparing the greater number of people...

This figure shows the clear danger of what an AI might look like if it is developed exclusively in one part of the world, under the influence of a particular culture and its unique moral standards. This, to me, is a clear sign that we are not ready to face the technology of tomorrow with yesterday's morality, which is not universally accepted. If our culture can have such a profound effect on how we develop AI, then it will not be the AI that is to blame for failing, but the humanity that failed to cooperate to begin with.

Closing Thoughts

It is not clear whether this experiment will be used directly to develop AI. Still, if you believe that the dawn of autonomous machines is on the horizon, I highly recommend that you visit the Moral Machine site and try the test to help further the AI's understanding of moral decision making, but most of all your own!

Let me know what you think, and please feel free to share your experience in the comments. I would love to discuss, debate or simply revel in the sweet nihilism of it all :D

- A N K A P O L O
